-

Recurse Center - Batch 3 - Cycle 20250305-20253207 - Debugging

Date: 2025-03-07

Category: rc

-

Recurse Center - Batch 3 - Cycle 20250205-20250207 - Training

Date: 2025-02-07

Category: rc

-

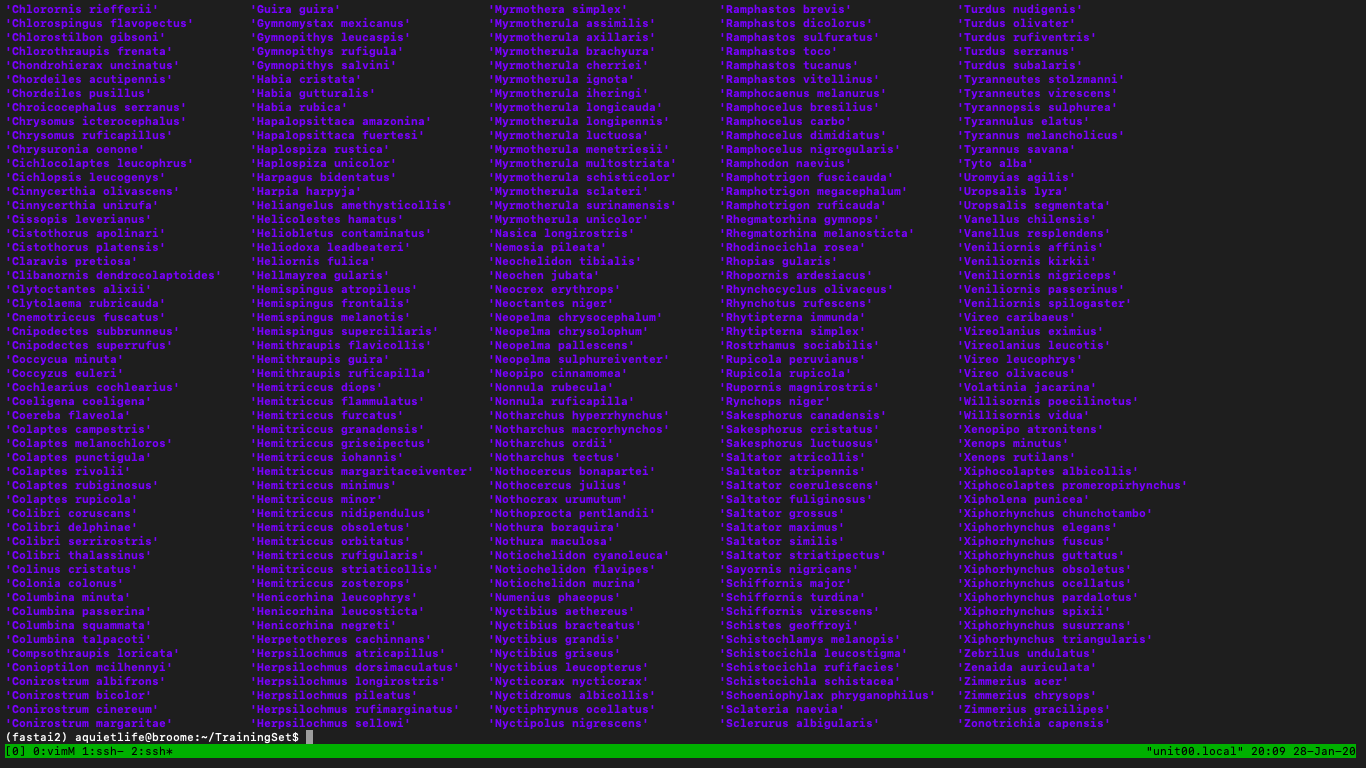

Recurse Center - Batch 3 - Cycle 20250128-20250130 - Processing

Date: 2025-01-30

Category: rc

-

Recurse Center - Batch 3 - Cycle 2025012-20250114 - Decoding

Date: 2025-01-26

Category: rc

-

Recurse Center - Batch 3 - Cycle 2025012-20250114 - Encoding

Date: 2025-01-22

Category: rc

-

Recurse Center - Batch 3 - Cycle 2025012-20250114 - Stagnation

Date: 2025-01-14

Category: rc

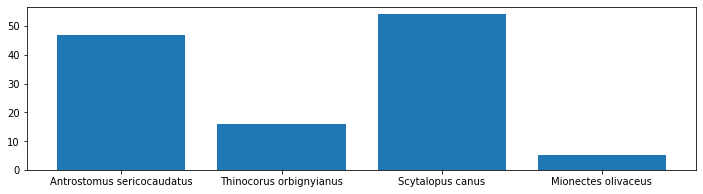

- Research

- Research

-

Introduction exercises in vim from Primagen's Vim As Your Editor series.

-

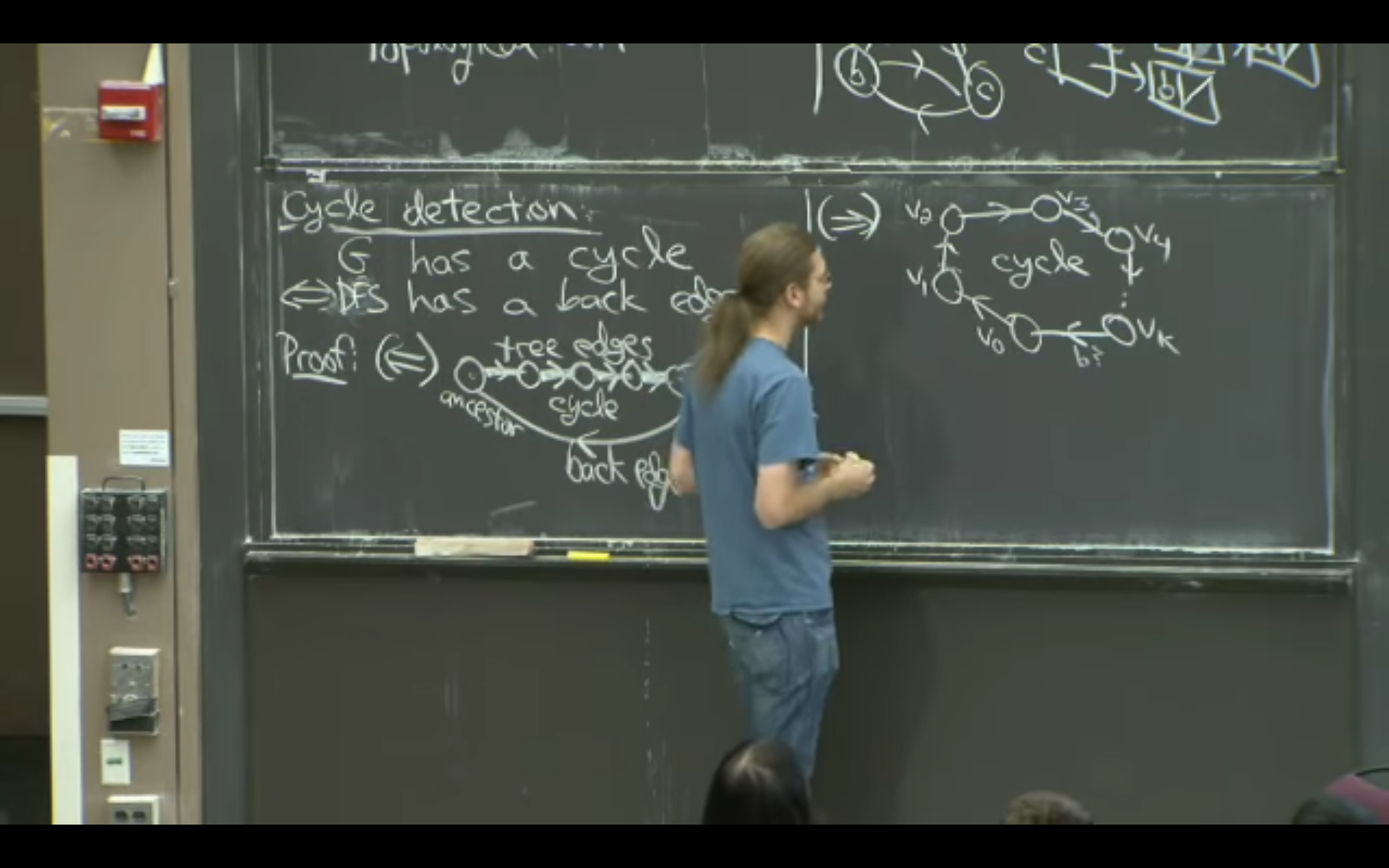

I outlined the different steps necessary for implementing the speech transformer's forward pass:

- And I implemented the Attention layer, based on some past work / resources from ARENA:

-

Recurse Center - Batch 3 - Cycle 20250108-20250110 - Extension

Date: 2025-01-10

Category: rc

-

Connect Zora to LLM

-

Create browser extension / native desktop apps for Zora

-

Integrate with Home Assistant

-

TCP/IP Illustrated (with Rust?)

-

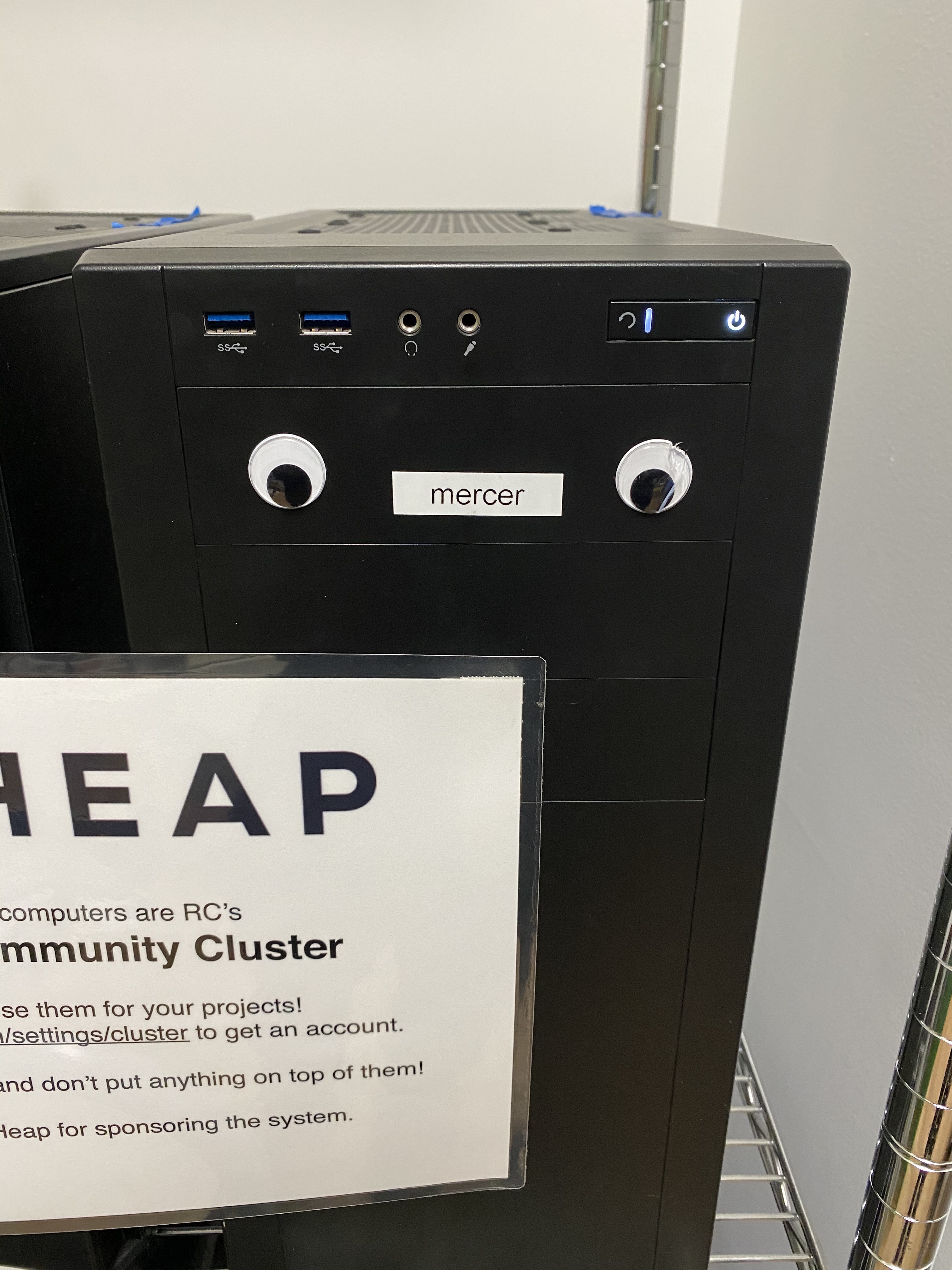

Operating Systems: Three Easy Pieces (on Heap?)

-

Inside the Machine (on Heap?)

-

Gang of Four Design Patterns (for Zora?)

-

SICP

-

Python Elements of Programming Interview (for software engineering/computer science?)

-

Learning Clojure (for fun / stretching my brain / doing something completely orthogonal to everything else I'm working on)

-

Recurse Center - Batch 2 - Cycle 20241211-20241213 - Conclusion

Date: 2024-12-13

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241207-20241209 - Conversation

Date: 2024-12-09

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241203-20241205 - Dimension

Date: 2024-12-05

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241129-20241201 - Extension

Date: 2024-12-01

Category: rc

- Extend my RC batch an additional six weeks

- Work on Audrey and feature visualizaiton examples for a presentation at RC at the end of my batch

- Continue working on Heap

- Continue chipping away at speech transformer and mech interp, knowning that I'll get to more of it during my (hopefully approved) batch extension

-

Recurse Center - Batch 2 - Cycle 20241125-20241127 - Transportation

Date: 2024-11-27

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241117-20241119 - Gestation

Date: 2024-11-19

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241113-20241115 - Continuation

Date: 2024-11-15

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241109-20241111 - Vacation!

Date: 2024-11-11

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241105-20241107 - Reflection

Date: 2024-11-07

Category: rc

-

Today I worked on organizing the first Heap Computer Club meeting.

-

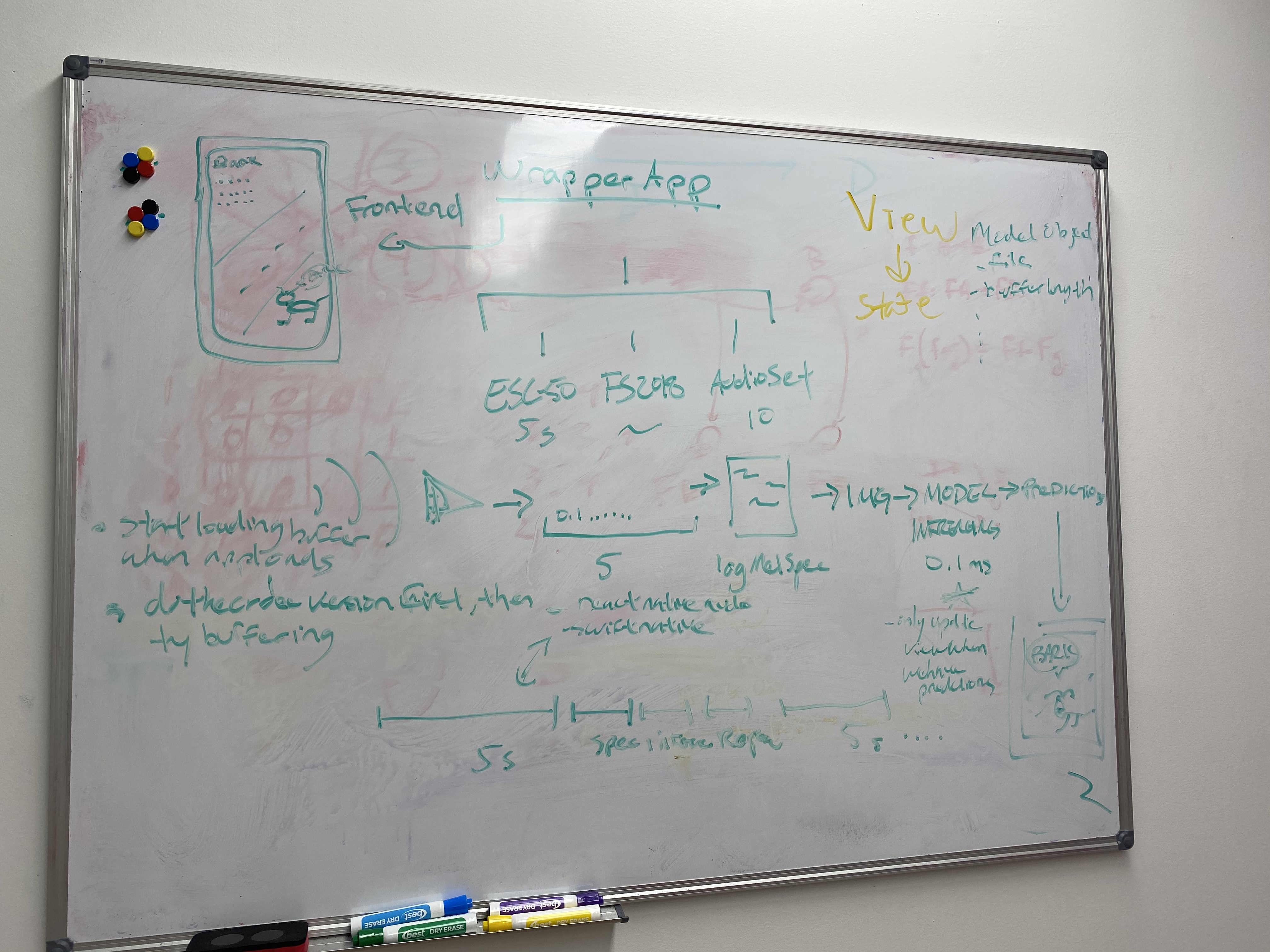

Afterawrd I shared my demo video of Audrey to Recursers, and I've been getting some great responses. Here's the video below:

- Finally I spent some time thinking about this ASR library that I want to make.

-

I had some more tasks to do with Heap, including cleaning up some unused disk space and figure out our meeting time.

-

I also had a wonderful chat with a faculty member at Recurse, who helped me unpack my thoughts about the self-directives above, as well as helped me articulate outloud my why and decide to make this library.

-

I also did some retraining on Audrey, cleaning up some code and using a larger (generated) dataset.

-

I went to an AI Safety presentation by a fellow Recurser that helped oe appreciate more some of the implications of rapid AI progress and why AI safety is important.

-

I did some research on feature visualization in CNNs

-

I wrote the README for Zora!

-

Recurse Center - Batch 2 - Cycle 20241101-20241103 - Direction

Date: 2024-11-03

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241028-20241030 - Transition

Date: 2024-10-30

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241024-20241026 - Interpretation

Date: 2024-10-26

Category: rc

-

Open Problems in Mechanistic Interpretability: A Whirlwind Tour | Neel Nanda | EAGxVirtual 2023

-

Open Problems in Mechanistic Interpretability: A Whirlwind Tour

-

Concrete Open Problems in Mechanistic Interpretability: Neel Nanda at SERI MATS

-

Record my own voice for digits

-

Clean up my notebook code :)

-

Train a few more times and write up to Weights and Biases

-

Look into how I can introduce ideas of observability into this model

-

Conformer: Convolution-augmented Transformer for Speech Recognition

-

Conformer: Convolution-augmented Transformer for Speech Recognition

-

PyTorch implementation of Conformer: Convolution-augmented Transformer for Speech Recognition

-

Recurse Center - Batch 2 - Cycle 20241020-20241022 - Transformation

Date: 2024-10-22

Category: rc

-

Recurse Center - Batch 2 - Cycle 20241016-20241018 - Optimization, Propagation, Variation, Generation, Discrimination

Date: 2024-10-18

Category: rc

-

This page, A Visual Explanation of Gradient Descent Methods (Momentum, AdaGrad, RMSProp, Adam), gave a lot of lovely visual examples of how these optimizers work and how each of them improves over the previous one.

-

This video on Optimization for Deep Learning (Momentum, RMSprop, AdaGrad, Adam) also looked good as well.

-

How to use Weights and Biases to do a sweep of hyperparamters to search for the most optimal hyperparamters that maximize model accuracy.

- Today I was mostly off-line tending to non-Recurse related things.

-

Concrete Steps to Get Started in Transformer Mechanistic Interpretability

-

Progress measures for grokking via mechanistic interpretability

-

Accompanying website to Progress Measures for Grokking via Mechanistic Interpretability

-

Mechanistic Interpretability - NEEL NANDA (DeepMind) on Machine Learning Street Talk

-

Reading AI's Mind - Mechanistic Interpretability Explained [Anthropic Research]

-

Recurse Center - Batch 2 - Cycle 20241012-20241014 - Normalization

Date: 2024-10-14

Category: rc

-

Start NNFS Chapter 7

-

Finishing up Ch 0 of ARENA next cycle

-

Starting Chapter 1 in two sycles

-

I want to carve out time next cycle to work on simple sound MNIST project:

-

Here are some thoughts:

-

speech digit recognizer (Audrey)

-

generating synthetic sound digit dataset

-

training like mnist or using resnet

-

visualizing dataset

-

visualizing network

-

introducing data augmentation for:

-

musicality

-

repetition

-

stress

-

prosody etc.

-

-

Demo: Making a phone call / sending a text

-

-

-

Look into CUDA

-

Recurse Center - Batch 2 - Cycle 20241008-202410010 - Convolution

Date: 2024-10-10

Category: rc

-

Finish Chapter 0 in ARENA

-

Finish Chapters 7, 8, and 9 in NNFS

-

Update my ml_ai_self_study repo on Github

-

Work on my ASR system on Heap with transformers :)

-

Think about creating a synthetic speech dataset for numbers 0-9...

-

https://github.com/Jakobovski/free-spoken-digit-dataset

-

https://adhishthite.github.io/sound-mnist/

-

-

Recurse Center - Batch 2 - Cycle 20241004-20241006 - Transposition

Date: 2024-10-06

Category: rc

-

Review PyTorch methods (do the 100 NumPy exercises in PyTorch)

-

Update my ml_ai_self_study repo on Github

-

Continue working through the ARENA pre-requisites (last week of pre-requisites!)

-

Finish up to Chapter 6 in NNFS

-

Attempt to create an ASR system on Heap with transformers :)

-

Recurse Center - Batch 2 - Cycle 20240930-20241002 - Submersion

Date: 2024-10-02

Category: rc

-

Load all of the paper's from Ilya's list into Zotero and download locally.

-

Continue working through the ARENA pre-requisites.

-

Start working on Neural Networks from Scratch, reading the book and watching the videos.

-

Work through 100 NumPy exercises.

-

Consider what I now know about matricies and transformations to solve leetcode problems like rotate array and rotate image.

-

Do a bit more syling and implement creature comforts on this blog.

-

Matrices

Date: 2024-09-28

Category: dsa

- Key insight: Find the 0 in our matrix and store the location in a list. Once we've gone through our matrix, go back to our list and set its entire row and column to zero. I went with a naive, space inefficient approach for now, but will keep thinking about how to do this in O(m+n) and eventually constant space...

-

Recurse Center - Batch 2 - Cycle 20240926-20240928 - Attenuation

Date: 2024-09-28

Category: rc

-

I worked on some Leetcode problems, which I haven't touched in...years?

-

I also researched some guides to get back into DS+A studying. Here are some resources for moving forward into the future:

-

Did more DS+A, with new Recurse friend Camille Rullan.

-

Read through at a high level the Week 0: Prerequisites

-

Watch and take notes on 3Blue1Brown - Essence of Linear Algebra videos

-

Work through Changlin's Basic Linear Algerbra exercises.

-

Get my env set up on Heap

-

Stacks

Date: 2024-09-27

Category: dsa

- Key insight: Pop elements from stack 1 over to stack 1, pop the top of stack 2 to get our queue pop behavior, then bring everything from stack 2 back to stack 1.

-

Arrays

Date: 2024-09-26

Category: dsa

- Key insight: Figure out the complement for each value in nums, and then store that complement in a dictionary, wit the index of that value. After, iterate through nums again and see if n is in our complement_index dict (which means we found a complement). If so, return our current index of the complement, plus the index of the original value from our first scan. Make sure that both of these indexes aren't the same value.

-

Strings

Date: 2024-09-26

Category: dsa

- Key insight: When working with palindromes, look for a way to track pairs, and one odd character. For this problem, we leverage the notion that valid palindrome has pairs of characters, and at most one non-pair. We use a set to find pairs and if we have one, add two to our counter. At the end, if we have any more characters in our set, just add 1 to our counter. I wasn't sure how leetcode wanted to handle a string that couldn't make a palindrome, but I guess returning 0 makes sense in this context.

-

Recurse Center - Batch 2 - Cycle 20240922-20240924 - Reunion

Date: 2024-09-24

Category: rc

-

A Cycle of Study, Make, Play

Date: 2024-07-31

Category: process

-

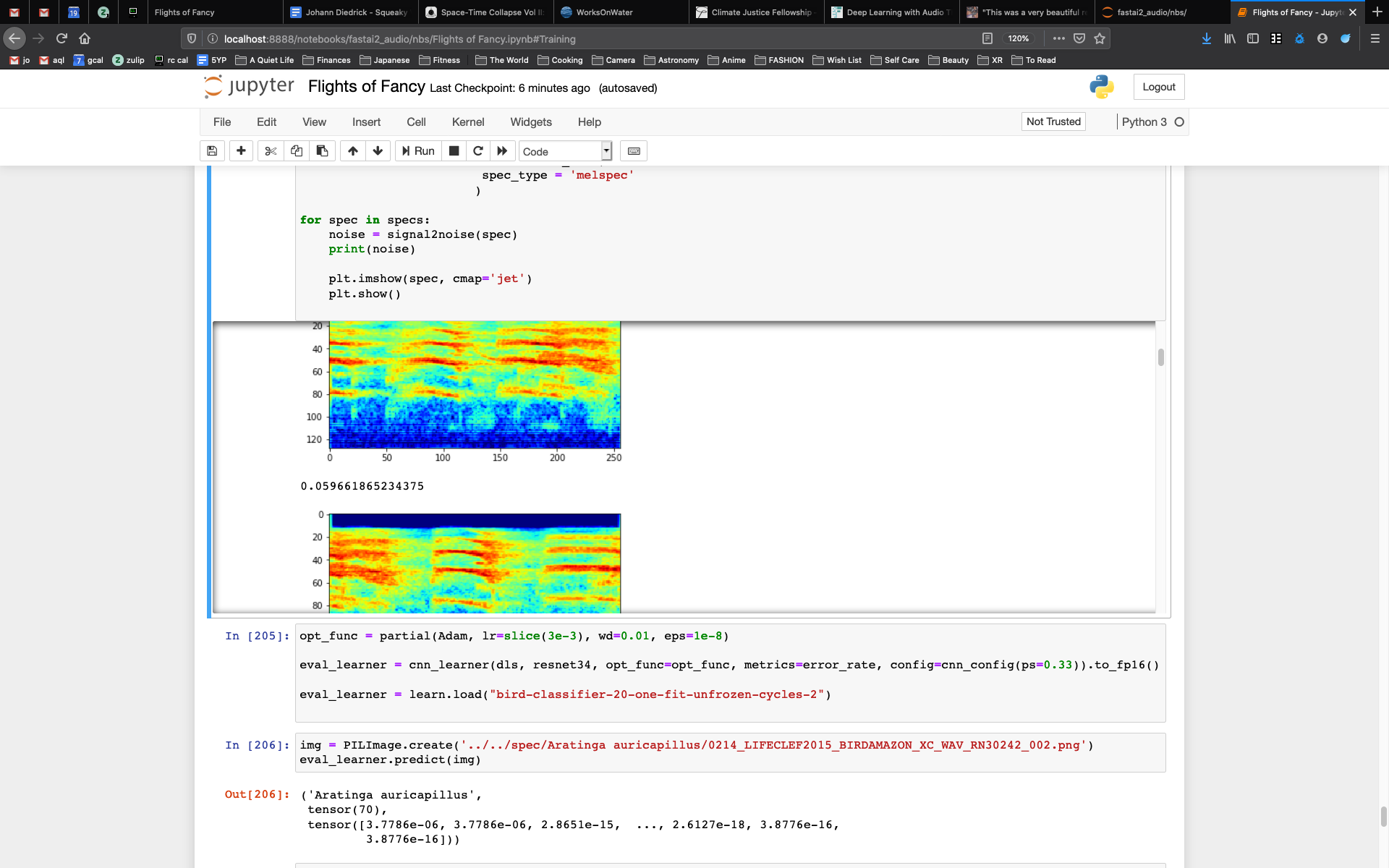

Flights of Fancy

Date: 2020-05-26

Category: research

-

Recurse Center - Batch 1 - Week 12 - Offset

Date: 2020-03-27

Category: rc

-

Recurse Center - Batch 1 - Week 11 - Buffer

Date: 2020-03-20

Category: rc

-

Recurse Center - Batch 1 - Week 10 - Parabolic

Date: 2020-03-13

Category: rc

-

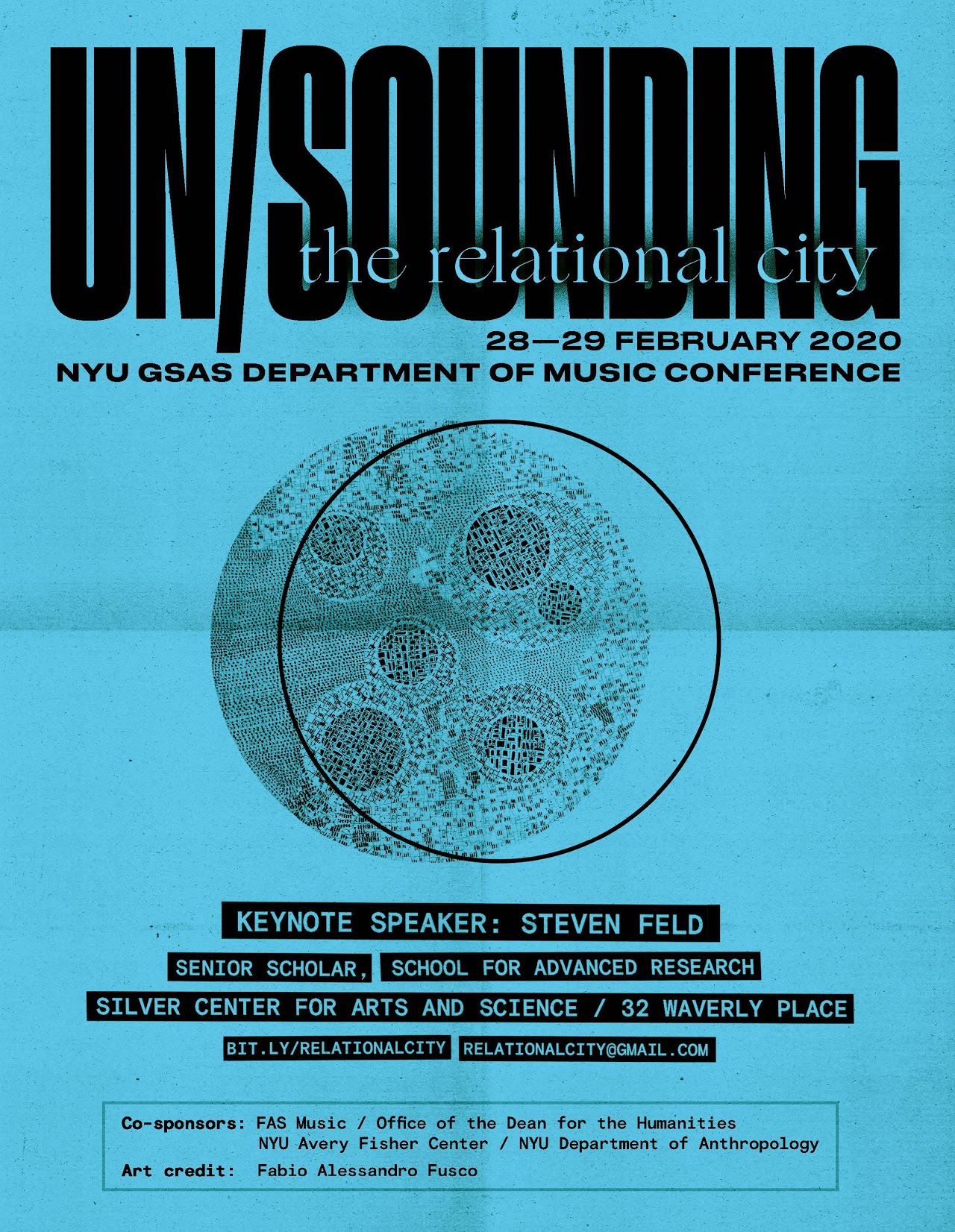

Recurse Center - Batch 1 - Week 9 - Unsounding

Date: 2020-03-06

Category: rc

-

Recurse Center - Batch 1 - Week 8 - Inflection Point

Date: 2020-02-28

Category: rc

-

Recurse Center - Batch 1 - Week 7 - Cycle

Date: 2020-02-21

Category: rc

-

Recurse Center - Batch 1 - Week 6 - Midpoint

Date: 2020-02-14

Category: rc

-

Recurse Center - Batch 1 - Week 5 - A Local Maxima

Date: 2020-02-07

Category: rc

-

Recurse Center - Batch 1 - Week 4 - Chirps

Date: 2020-01-31

Category: rc

-

Recurse Center - Batch 1 - Week 3 - Two Sides of the Same Coin

Date: 2020-01-24

Category: rc

-

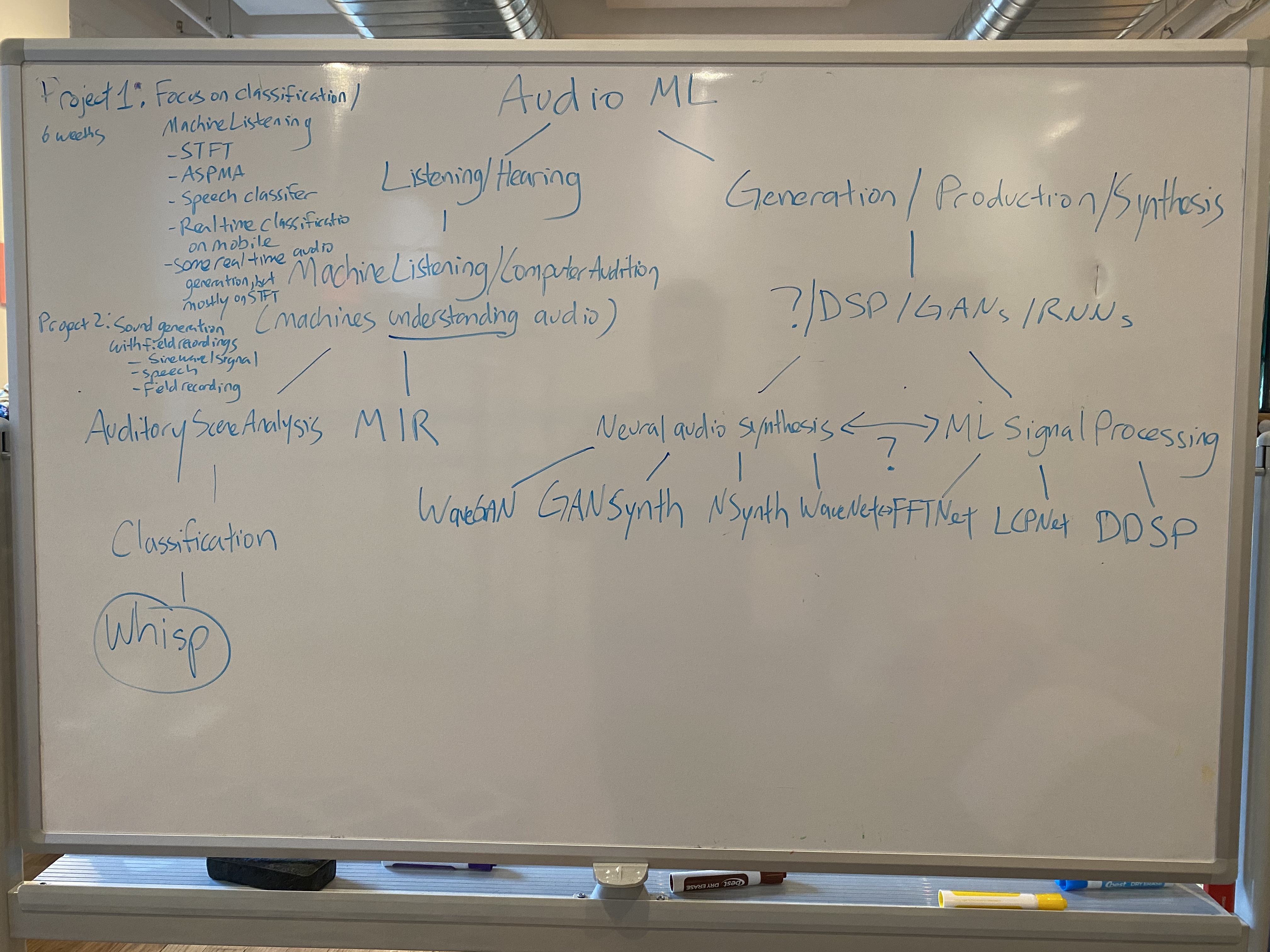

Recurse Center - Batch 1 - Week 2 - Noise to Signal

Date: 2020-01-17

Category: rc

- ML Signal Processing

- Neural audio synthesis

- The MARL community out of NYU (Brian McFee, Juan Pablo, Keunwoo Choi)

- The Music Hackathon community

-

Recurse Center - Batch 1 - Week 1 - Hello, RC!

Date: 2020-01-10

Category: rc

- NCA / Newtown Creek Bird Classifier

- Freesound multilabel classifier

- Shubert's tone generator

- Voice recognition for security

- Sonic generator with GANs

-

Quiet Music, Weak Sounds

Date: 2017-06-01

Category: residencies

Debugging

This cycle was spent getting back from a little break and continue debugging my speech transformer.

Day 1

Unfortunately my copy of the CommonVoice dataset got deleted, so I spent the day re-downloading and running scripts to setup the dataset again. Luckily everything was scripted, so it didn't take too long!

Day 2

Today was spent mostly debugging why I was getting bad output from my model while training. This was mostly spent debugging the CharacterVocabularly class and its encode/decode functions, to understand how tokenization and decoding was funcitoning and if there was some issue causing these weird output patterns in text as it was passed through the model.

With using_special_chars = True, we get output that looks like this:

Predicted text: ooooooooooooooooooooooooooooooooounknown_charhoooooounknown_charunknown_charhhvvvbsvvvbbboooolllllll...

Actual text: SOShe also fought at the battle of bothwell bridgeunknown_charEOSPADPADPADPADPADPADPADPADPADPADPADPA...

With using_special_chars = False, we get this kind of output:

Predicted text: dddd fffyyfffffdddiooooooffooeeeehhiooooooonnnnniioooooooinnniinnnnniiuueeeeeeexyyyfyjuooeeeeedeuu

Actual text: for example colombia chile argentina and venezuela

Something is happening (or not happening) with the predicted text, so I'll have more sluething to do. Excited to see what I end up learning!

Day 3:

Today was spent writing this long and continuing to debug the model. Something I want to figure out quicker debug feedback loop with starting training, getting output, and stopping the process to free of the GPU memory.

Things for next cycle

I'll be spending the next cycles continuing to train and debug my model. My hope is to get a working demo by the end of the month!

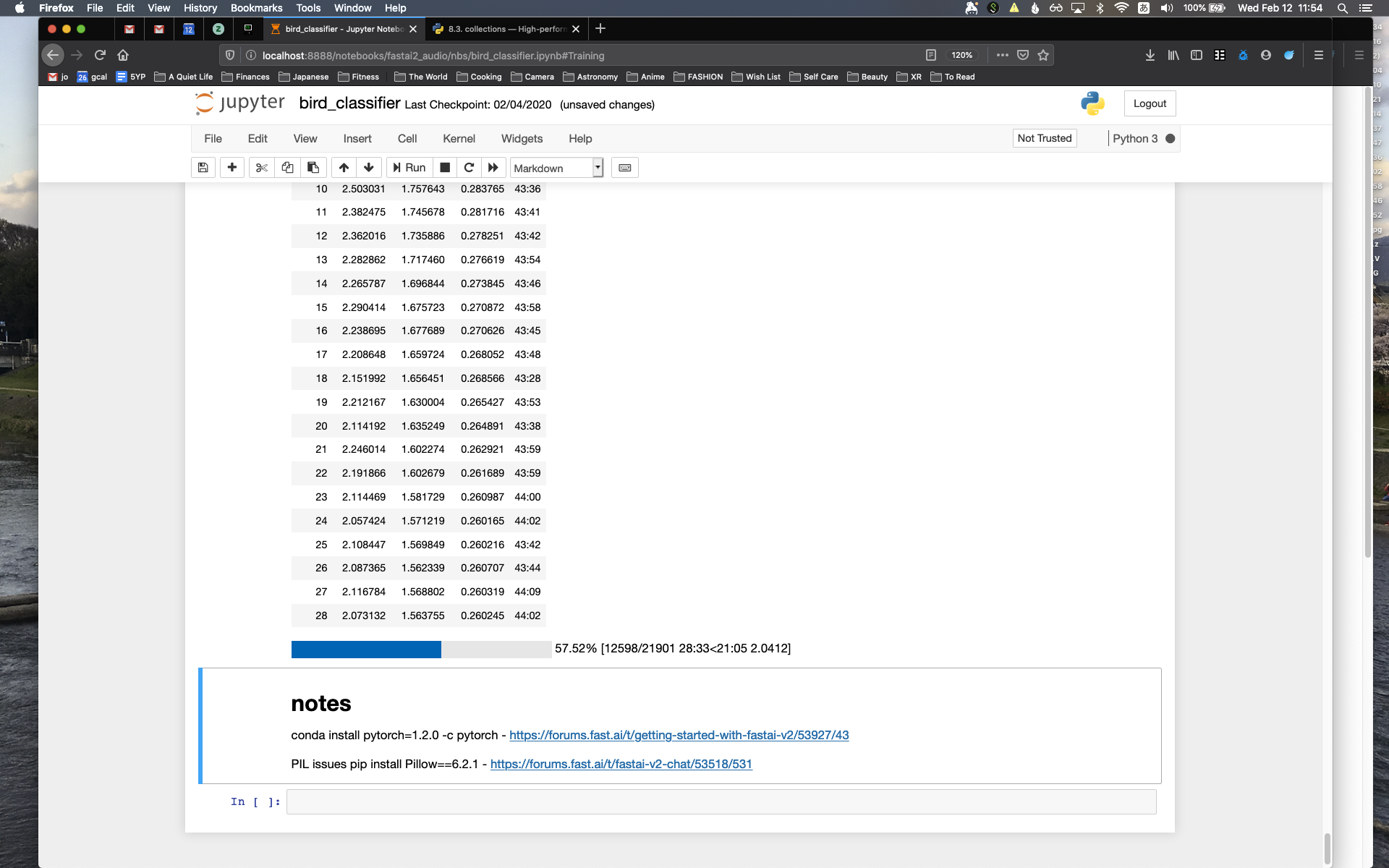

Training

I started training my speech transformer! A cool moment was during some debugging and decoded a tokenized piece of text that I had only seen first as a list of integers first, watching this sentence "emerge" from just numbers.

print("text: ", text)

...

text: tensor([[ 1, 26, 11, 28, 3, 30, 5, 15, 8, 22, 22, 30, 16, 28, 30, 22, 18, 24,

15, 3, 2, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0]]

...

print('string: ', self.cv.decode(text[0].tolist()))

...

string: why bless my soul

I'm dealing with an OutOfMembery error right now, as it seems like there is a bug in my collate_fn() that handles managing frame legnths to 20,000, but they seem to be entering my attention function at a way larger number, causing memory issues (getting attention scores scales quadradically)

audio feature shape: torch.Size([1, 46675, 80])

I'm excited to continue debugging and scale up training until I can get to a full YOLO run, hopefully next cycle!

Day 1

Today I started working on my SpeechTransformerLearner class, which is where training happens (for some reason I feel like calling it learning rather than training even though I know that's the convention).

SpeechTransformerLearner class

The first function I worked on was compute_loss(), which allows us to see whether or not our model is getting any better and predicting words from spectrogram/text pairs used in training/learning.

For speech recogntion, the metric we really care about is Word Rate Error, which is measured by comparing a speech recording and its transcript against what the model predicts the transcript is, only using the same speech recording.

WER is measured by this formula:

# WER = (Substitutions + Deletions + Insertions) / (Total Words in Ground Truth)

And can be calculated by finding the Levenshtein distance between predicted words and the ground truth transcription.

Day 2

Today I worked on more functions for my learner, like:

And a few other helper functions.

I also paired with another Recurser on some virtual machine / devops-y stuff on Heap, which was loads of fun :)

Day 3:

Today I continued working on getting closer to doing my first training run, starting with writing some more code for checkpointing, logging and tracking metrics like grad norm to see if there gradient clipping is necessary, and if so, what value it should be.

I was able to hook my learn() function up to Weights and Biases which was cool to see my training showing up in the dashboard, getting some experience in some MLOps.

Finally I started to get some training off the ground, but encounted some bugs around how my custom batching is working.

I did get to do some fun debugging and fiddled around with decoding some tokenized strings, which was gratifying to see!

This was the first text I got to see come out of decode():

string: dance of fire is a small trilogy

I'm really excited to get this working and scale up testing as much as we can.

Things for next cycle

Next cycle I'm going to continue training, and hopefuly get that working on Ray.

Processing

This was a pretty good cycle with a lot of heads-down work on text and audio preprocessing of the CommonVoice dataset. I also got to pair a bit on some new infrastructure setup on Heap.

Day 1

Today I worked on audio and text dataset pre-processing pipeline for my speech transformer. According to the paper, they used the "Wall Street Journal (WSJ) dataset, training on si284, validating on dev93 and evaluating on eval92 set."

It's not specified in the paper which specific WSJ speech corpus they used, as the Linguistic Data Consotrum offers two: WSJ0 and WSJ1.

Either way, each dataset costs $1,500 and $2,500 respectively for non-members, which is....a bit outside of my budget at the moment :)

So instead, I'm using Mozilla's CommonVoice dataset, which is completely free, open-source, and publicly available speech datasets that is comprised of community-donated speech samples.

I spent a good amount of time exploring the dataset, which you can follow along here:

I also worked on some classes for pre-processing this dataset for training with my speech transformer with a new speech transformer dataset file:

From some of the data analysis, we were able to determine that filtering out speech recordings with sentences > 100 characters would still allow us to use more than 95% of the dataset, which is good for keeping our sequence length tight for efficient training.

self.tsv_items = self.tsv_items[ self.tsv_items['sentence'].str.len() <= self.cfg.max_seq_length ]

I also learned how to use the apply() function in pandas to do efficiently clean up elements in our dataset, like renaming file extensions and cleaning up sentences.

For example, we can write functions like these:

# replace .mp3 with .wav

def replace_mp3_with_wav(path):

return path.replace("mp3", "wav")

# clean sentences

def clean_sentences(s):

return s.split('\t')[0].strip().lower()

And "apply" them like this!:

self.tsv_items['path'] = self.tsv_items['path'].apply(replace_mp3_with_wav)

self.tsv_items['sentence'] = self.tsv_items['sentence'].apply(clean_sentences)

One of the more interesting things I came across while working on this Dataset class was handling normalization. From the paper, they write:

"The input acoustic features were 80-dimensional filterbanks extracted with a hop size of 10ms and a window size of 25ms, extended with temporal first and second order differences and per-speaker mean subtraction and variance normalization."

I was curious to understand more about how this normalization worked and why it was necessary. Asking Claude, I got some answers:

Prompt:

Can you help me with normalization? Still don't provide any code.

Response:

For normalization, we have a few options based on the paper's specification of "per-speaker mean subtraction and variance normalization":

Simple Approach (per utterance):

- Calculate mean across time and frequency for each utterance

- Subtract mean

- Calculate variance

- Normalize by standard deviation

Pros: Simple, no speaker tracking needed

Cons: Not exactly what paper describes

Per-Speaker Approach (matches paper):

- Track speaker IDs from CommonVoice

- Calculate stats across all utterances from same speaker

- Store/cache statistics

- Apply during getitem

Pros: Matches paper exactly

Cons: More complex, needs speaker management

Global Statistics (alternative):

- Calculate stats across entire dataset

- Store in dataset class

- Apply same normalization to all samples

Pros: Simple, consistent

Cons: Loses speaker-specific characteristics

For best results, we should implement the per-speaker approach. This would need:

1. Speaker ID tracking in init

2. Statistics calculation/storage

Lookup during normalization

Would you like me to explain how to implement any of these approaches in detail?

Remember: The paper used per-speaker normalization because different speakers have different acoustic characteristics (pitch, volume, speaking rate, etc.)

I figured out where speaker ID was stored in the dataset (as client_id), but still wanted to understand a bit more about what this normalization would actually accomplish for training purposes. In particular, because of my research on bias in speech recognition systems, I was really curious to know how normalization affected the how the model would handle (or not handle) different acoustic characteristics:

Prompt:

And why do we need to do normalization?

Response:

Normalization is important for several reasons:

- Speaker Variation:

- Different speakers have different voice characteristics

- Some speak louder/softer

- Different pitch ranges

- Different speaking rates

- Different accents/pronunciations

- Model Training Benefits:

- Makes training more stable

- Helps model focus on important features

- Reduces impact of speaker-specific variations

- Makes features more consistent across speakers

- Technical Reasons:

- Brings features to similar scale

- Prevents very large/small values

- Helps with gradient flow

- Makes optimization easier

- Paper's Approach:

- They use per-speaker normalization

- Helps model learn speaker-independent patterns

- Makes model more robust to different speakers

Think of it like this:

- Without normalization: Model has to learn to handle raw differences between speakers

- With normalization: Model can focus on the actual speech patterns rather than speaker-specific characteristics

Would you like me to:

- Show what the features look like before/after normalization?

- Explain different normalization strategies?

- Or continue with implementation?

I ended up going with a more simple approach to normalization, which normalizes on a per-utterance basis.

I'll probably go back and and re-evaluate that approach at a later date to see how it affects model performance. All said though, I was really surprised to learn how normalization works to average out speaker-specific characteristics in order to make training more efficient and effective, which is something that comes up in Mills and Li's research on speech recognition in Vocal Features. Could this be one reason why ASR systems exhibit bias?

Finally, I worked on setting up custom batching with a custom collate function that follows the paper's approach to processing the data for the model:

"In the training stage, the samples were batched together by approximate feature sequence length and each training batch contained 20000-frames features."

More on training next cycle!

Day 2

Today I paired with fellow Recurser on setting up and playing around with Ray, an orchestration infrastructure tool that handles the queuing and running of jobs across GPU machines (among other things). I got to learn a bit more about Kubernetes, SSH tunneling, port forwarding, and got to see a job get initialized on Ray (but didn't run due to some disk space issues on Heap :/ )

In debugging the disk space issue, I learned about ncdu as a prettier way to see and analyze disk space usage.

Day 3:

Today was mostly spent reviewing notes and writing this log.

Things for next cycle

Next cycle I should be training my speech transformer! I'm hoping I can do more stuff with Ray as well (and maybe do my training through its interface?)

Decoding

This cycle I finished up coding the speech transformer from end-to-end :)

The code for the speech transformer looks something like this now:

My encoder looks like this:

class Encoder(nn.Module):

"""Encoder

Input: [batch time_steps freq_bins]

Output: [batch seq_length d_model]

This class processes a batch of spectrograms by:

- Expanding the input tensor by one dimension for channel

- Passing our tensor through a Conv2d block (with ReLU)

- Passing our tensor through another Conv2d block (with ReLU)

- Reshaping out tensor so it has three dimensions instead of four

- Passing through a linear layer so we get an output shape of d_model for the last dimension

- Passing our tensor through a posiitonal encoder

- Passing out query_input through n encoder blocks

- Passing our tensor through a layer norm

- Outputting our encoded output to be used by the decoder (for cross attention)

"""

def __init__(self, cfg: Config):

super().__init__()

self.cfg = cfg

# encoder components

self.repeat = Repeat(self.cfg)

self.conv2d_block_one = Conv2DBlock(self.cfg, self.cfg.n_channels, self.cfg.n_out_channels, self.cfg.conv2d_kernel_size, self.cfg.conv2d_stride, self.cfg.conv2d_padding)

self.conv2d_block_two = Conv2DBlock(self.cfg, self.cfg.n_out_channels, self.cfg.n_out_channels, self.cfg.conv2d_kernel_size, self.cfg.conv2d_stride, self.cfg.conv2d_padding)

self.reshape = Reshape(self.cfg, "b c ts fb -> b ts (c fb)")

self.linear = Linear(self.cfg)

self.positional_encoder = PositionalEncoder(self.cfg)

self.encoder_blocks = nn.Sequential(

*[EncoderBlock() for _ in range(self.cfg.n_encoder_layers)]

)

self.layer_norm = LayerNorm(self.cfg)

# encoder as an nn.Sequential

self.sequential = nn.Sequential(

self.repeat,

self.conv2d_block_one,

self.conv2d_block_two,

self.reshape,

self.linear,

self.positional_encoder,

self.encoder_blocks,

self.layer_norm

)

def forward(self, x: Float[t.Tensor, "batch time_steps freq_bins"]) -> Float[t.Tensor, "batch seq_length d_model"] : # type: ignore

# take in our input spectrograms, encode, and generate encoded outputs to be used by the decoder for cross-attention

assert x.ndim == 3, f"Expected 3 dimensions, got {x.ndim}"

assert x.shape[2] == self.cfg.n_freq_bins

return self.sequential(x)

And my decoder looks like this:

class Decoder(nn.Module):

def __init__(self, cfg):

super().__init__()

self.cfg = cfg

self.character_embedding = CharacterEmbedding(self.cfg)

self.decoder_positional_encoder = PositionalEncoder(self.cfg)

self.decoder_blocks = nn.ModuleList(

[DecoderBlock(self.cfg) for _ in range(self.cfg.n_decoder_layers)]

)

self.layer_norm = LayerNorm(self.cfg)

self.linear = nn.Linear(self.cfg.d_model, self.cfg.vocab_size)

self.soft_max = nn.Softmax(dim=-1) # Apply softmax along the vocabulary dimension

def forward(self, x,

key_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None, # type: ignore

value_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None # type: ignore

):

# take in our input tokens, encode, and generate character embeddings

embeddings = self.character_embedding(x)

encoded_sequence = self.decoder_positional_encoder(embeddings)

assert encoded_sequence.ndim == 3

assert encoded_sequence.shape[1] <= self.cfg.max_seq_length

assert encoded_sequence.shape[2] == self.cfg.d_model

# pass positional encoding into decoder blocks

x = encoded_sequence

for block in self.decoder_blocks:

x = block(x, key_input, value_input)

x = self.layer_norm(x)

x = self.linear(x)

probabilities = self.soft_max(x)

return probabilities

More on the full SpeechTransformer and its classes can be found here:

Day 1

Today I started refactoring the encoder into its own class. Once that was done, I did some refactoring of the decoder as I wrapped up both sides of the speech transformer diagram.

I learned a bit about static type checkers in IDEs.

I developed a deeper understanding of what the signifigance of our sequence dimension, and how this relates to vocab_size and d_model.

In implementing cross-attention in the speech transformer's decoder, we wnat to use the same Attention class to do both self-attention (with just query_input) or cross-attention (with query_input, as well as key_input, and key_input that come from the output in our encoder.

In order to accomplish this, we use a lambda function that lets us pass optional parameters that end up "closing over" and capturing the extra parameters, if used.

If key_input and value_input are empty, we default to useing query_input for calcuating Q, K, and V in our attention function. If we pass in key_input and value_input from our encoder's output, they will be used in their respective functions in the attention function. This way we can support self-attention and cross-attention in the same class/function.

Here's what that looks like in code:

In our decoder:

class DecoderBlock(TransformerBlock):

def __init__(self, cfg):

super().__init__()

self.cfg = cfg

self.layer_norm_one = nn.LayerNorm(self.cfg.d_model)

self.attention_masked = Attention(self.cfg, apply_mask=True)

self.layer_norm_two = nn.LayerNorm(self.cfg.d_model)

self.attention_unmasked = Attention(self.cfg, apply_mask=False)

self.layer_norm_three = nn.LayerNorm(self.cfg.d_model)

self.feed_forward_network = FFN(self.cfg)

def forward(

self,

x: Float[t.Tensor, "batch posn d_model"], # type: ignore

key_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None, # type: ignore

value_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None # type: ignore

) -> Float[t.Tensor, "batch posn d_model"]: # type: ignore

x = self.add_to_residual_stream(x, self.layer_norm_one, self.attention_masked)

x = self.add_to_residual_stream(x, self.layer_norm_two, lambda norm_x: self.attention_unmasked(norm_x, key_input, value_input)) # this layer needs to use encoder outputs as its inputs for keys and values, and use queries from previous sub-block outputs

x = self.add_to_residual_stream(x, self.layer_norm_three, self.feed_forward_network)

return x

And in our Attention class:

def forward(

self,

query_input: Float[t.Tensor, "batch posn d_model"], # type: ignore

key_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None, # type: ignore

value_input: Optional[Float[t.Tensor, "batch posn d_model"]] = None # type: ignore

) -> Float[t.Tensor, "batch posn d_model"]: # type: ignore

# linear map

Q = einops.einsum(query_input,

self.W_Q,

"b s e, n e h -> b s n h") + self.b_Q

K = einops.einsum(query_input if key_input is None else key_input,

self.W_K,

"b s e, n e h -> b s n h") + self.b_K

V = einops.einsum(query_input if value_input is None else value_input,

self.W_V,

"b s e, n e h -> b s n h") + self.b_V

# ...

And as I wrapped up the decoder, I got a better understanding of how our output probabilities ends up as a vocabulary size, predicting what character should come next. This was a bit a-ha moment for me!

Day 2

Today I reactored and tested the full encoder-decoder speech transformer, as well as some of the older tests where I modified the implementation since yesterday.

def test_speech_transformer(model, cfg):

cv = CharacterVocabulary(cfg)

batches = 2

time_steps = 100

freq_bins = model.cfg.n_freq_bins

text_input = "listening is a practice of freedom"

encoded_text_input = cv.encode(text_input)

assert len(encoded_text_input) == model.cfg.max_seq_length

encoded_text_tensor = t.tensor(encoded_text_input, dtype=t.long)

encoded_text_tensor = einops.repeat(encoded_text_tensor, "seq_length -> b seq_length", b=batches).to(cfg.device)

spectrograms = t.randn(batches, time_steps, freq_bins).to(cfg.device)

output = model(spectrograms, encoded_text_tensor)

assert output.shape == (batches, model.cfg.max_seq_length, model.cfg.vocab_size)

Day 3:

Today was mostly spent writing this post and re-factoring some code in my tests, using pytest fixtures.

Things for next cycle

Next cycle will be training, so I'm looking forward to setting up a data processing pipeline and doing my first training run with this model! I also have a few things that are less of a priority, like move some of the asserts in the classes to tests.

Encoding

This was a really productive past few days where I made a lot of progress on this speech transformer.

A lot of what I'm trying to do is incredibly new for me (building an automated speech recognition system from scratch), and without the benefit of a mentor to help introduce me to a lot of new concepts that I'm not familiar with, I've been relying on using Claude in Cursor to help introduce me to these concepts at a high-level, so I can program it myself. I've explictly asked it to never provide any code, and instead help me build intuition and guide me through implementation through questions and explaination. A sample prompt might look like:

I'm implementing the decoder portion of a Speech Transformer following the paper "Speech-Transformer: A No-Recurrence Sequence-to-Sequence Model for Speech Recognition". I have already implemented: 1. Complete encoder with: - Initial feature extraction (Conv2D layers) - Linear projection and positional encoding - Multi-head attention (with optional masking) - Feed-forward networks - Layer normalization and residual connections Now I need to implement the decoder. According to the paper, each decoder block should have: 1. Masked multi-head self-attention 2. Cross-attention with encoder outputs 3. Position-wise feed-forward networks 4. Layer normalization and residual connections Additionally, the decoder needs: 1. Character embedding layer at the input 2. Final linear + softmax for output probabilities Please help guide me through implementing these components. Don't provide code directly - instead help me build intuition and guide me through the implementation with questions and explanations.

Or I might write a prompt with the image of the model attached and some text from the paper itself:

Hi! Please read the attached prompt and start helping me along. Today I want to finish the implemetation of the decoder. Please don't provide any code, just help me help me build intuition and guide me through implementation through questions and explaination. I've also attached an image of the architecture. Here is the description of the decoder from the paper: "The decoder is shown in the right half of Figure 2. We firstly employ a learned character-level embedding to convert the character sequence to the output encoding of dimension dmodel, which is added with the positional encoding. Then, the sum of them are inputted to a stack of Nd decoder-blocks to obtain the final decoder outputs. Differently from the encoder-block, each decoder-block has three sub-blocks: The first is a masked multi-head attention which has the same queries, keys and values. And the masking is utilized to ensure the predictions for position j can depend only on the known outputs at positions less than j. The second is a multi-head attention whose keys and values come from the encoder outputs and queries come from the previous sub-block outputs. The third is also positionwise feed-forward networks. Like the encoder, layer normalization and residual connection are also performed to each sub-block of the decoder. Finally, the outputs of decoder are transformed to the probabilities of output classes by a linear projection and a subsequent softmax function."

I've found this way of working with LLMs like Claude extremely helpful, and it was something I got introduced to while working through ARENA during my last batch. In one of the notebooks, they encourage us to use LLMs to help understand code or concepts we aren't familiar with or are seeing for the first time, and they explain this distinction between "playing in easy mode" vs "playing in hard mode":

From ARENA, they write:

We'll be discussing more advanced ways to use GPT 3 and 4 as coding partners / research assistants in the coming weeks, but for now we'll look at a simple example: using GPT to understand code. You're recommended to read the recent LessWrong post by Siddharth Hiregowdara in which he explains his process. This works best on GPT-4, but I've found GPT-3.5 works equally well for reasonably straightforward problems (see the section below).

This is where I got introduced to the concept of "playing in easy mode" vs "playing in hard mode":

Is using GPT in this way cheating? It can be, if your first instinct is to jump to GPT rather than trying to understand the code yourself. But it's important here to bring up the distinction of playing in easy mode vs playing in hard mode. There are situations where it's valuable for you to think about a problem for a while before moving forward because that deliberation will directly lead to you becoming a better researcher or engineer (e.g. when you're thinking of a hypothesis for how a circuit works while doing mechanistic interpretability on a transformer, or you're pondering which datastructure best fits your use case while implementing some RL algorithm). But there are also situations (like this one) where you'll get more value from speedrunning towards an understanding of certain code or concepts, and apply your understanding in subsequent exercises. It's important to find a balance!

It's been nice finding that balance by learning something new from Claude, and then trying to implement that concept on my own. Then when something like that comes up again, I'm feeling myself getting better at writing a lot of things myself from scratch that I've never seen before, as my intuition continues to grow and new concepts begin to stick :)

Day 1

Today was mostly spent working on the encoder side of the abode diagram. That meant implementing a lot of classes, and writing tests for them. At the end of the day, our SpeechTransformer class ended up looking like this:

class SpeechTransformer(nn.Module):

"""Speech Transformer model that converts speech spectrograms to text.

The model follows the architecture from "Speech-Transformer: A No-Recurrence

Sequence-to-Sequence Model for Speech Recognition" paper.

Architecture Overview:

1. Two Conv2d layers with stride 2 reduce time and frequency dimensions by 4x

2. Linear projection to d_model dimension

3. Positional encoding

4. Transformer encoder blocks

5. Layer norm

Currently encoder-only!

Input shape: [batch_size, time_steps, freq_bins]

Output shape: [batch_size, reduced_time_steps, d_model]

"""

def __init__(self):

super().__init__()

self.cfg = Config()

# encoder components

self.repeat = Repeat(self.cfg)

self.conv2d_block_one = Conv2DBlock(self.cfg, self.cfg.n_channels, self.cfg.n_out_channels, self.cfg.conv2d_kernel_size, self.cfg.conv2d_stride, self.cfg.conv2d_padding)

self.conv2d_block_two = Conv2DBlock(self.cfg, self.cfg.n_out_channels, self.cfg.n_out_channels, self.cfg.conv2d_kernel_size, self.cfg.conv2d_stride, self.cfg.conv2d_padding)

self.reshape = Reshape(self.cfg, "b c ts fb -> b ts (c fb)")

self.linear = Linear(self.cfg)

self.encoder_positional_encoder = PositionalEncoder(self.cfg)

self.encoder_blocks = nn.Sequential(

*[EncoderBlock() for _ in range(self.cfg.n_encoder_layers)]

)

self.layer_norm = LayerNorm(self.cfg)

# encoder sequential

self.encoder = nn.Sequential(

self.repeat,

self.conv2d_block_one,

self.conv2d_block_two,

self.reshape,

self.linear,

self.encoder_positional_encoder,

self.encoder_blocks,

self.layer_norm

)

# decoder components

self.character_embedding = CharacterEmbedding(self.cfg)

self.decoder_positional_encoder = PositionalEncoder(self.cfg)

PyTorch has this really nice construct called nn.Sequential, which let's you stack a bunch of nn.Modules together, and you can simply call:

def forward(self, x: Float[t.Tensor, "batch time_steps freq_bins"]) -> Float[t.Tensor, "batch reduced_time d_model"]: # type: ignore

return self.encoder(x)

And nn.Sequential will call all of the forward methods for those objects! That way you can pass your input just once into your sequential, and your data will flow through all of those layers, sequentially. :)

Day 2

Today I started working on the decoder side of the model, and got as far as writing the classes for encoding/decoding input text (CharacterVocabulary) and creating our embeddings (CharacterEmbeddings). Here is what those two classes look like.

Day 3:

Today I had to handle some non-RC things.

Things for next cycle

My hope is to finish the decoder side of the speech transformer model, and do some more documentation / reviewing what I've been learning. It's been a lot so far! Hopefully by the end of the next cycle we can start training this speech transformer with open datasets like CommonVoice.

Stagnation

I had a hard time programming this cycle. I think I've been a bit intimated diving back into doing hard, scary things and I've been procrastinating a bit during the day. I've found a lot more success coding at night, but I want to do better at coding during the day / pairing with others.

I did manage to do some exercises in Vim, and write a little more code towards my speech transformer.

Day 1

Day 2

Day 3:

class SpeechTransformer(nn.Module):

def __init__(self):

super().__init__()

self.cfg = Config()

def forward(self, x):

#TODO modify this code to return the right output shape once we determine what that is

# Conv2d + ReLu - initial feature extraction

# Conv2d + ReLu - more feature extraction

# Linear - project to d_model dimension (this is where embedding happens!)

# Reshape

x = einops.reshape(x, "b ts d_model-> b (ts fb) feature_dim", feature_dim=self.cfg.d_model) # b ts fb

# Input Encoding (Positional Encoding) - add positional information to embedded sequence

# Attention Blocks - process the sequence

# Layer Norm

# Multi-Head Attention

# Layer Norm

# MLP

# Layer Norm

return x

def forward(

self,

normalized_resid_pre: Float[Tensor, "batch posn d_model"]

) -> Float[Tensor, "batch posn d_model"]:

# linear map

Q = einops.einsum(normalized_resid_pre,

self.W_Q,

"b s e, n e h -> b s n h") + self.b_Q

K = einops.einsum(normalized_resid_pre,

self.W_K,

"b s e, n e h -> b s n h") + self.b_K

V = einops.einsum(normalized_resid_pre,

self.W_V,

"b s e, n e h -> b s n h") + self.b_V

attn_scores = einops.einsum(Q,

K,

"batch seq_q head_index d_head, batch seq_k head_index d_head -> batch head_index seq_q seq_k"

)

scaled_attn_scores = attn_scores / (self.cfg.d_head ** 0.5)

masked_attn_scores = self.apply_causal_mask(scaled_attn_scores)

A = t.softmax(masked_attn_scores, dim=-1) # attention is all we need!

z = einops.einsum(A, V, "b n sq sk, b sk n h -> b sq n h")

result = einops.einsum(z, self.W_O, "b sq n h, n h e -> b sq e")

return result + self.b_O

def apply_causal_mask(self, attn_scores: Float[Tensor, "batch n_heads query_pos, key_pos"]

) -> Float[Tensor, "batch n_heads query_pos key_pos"]:

return attn_scores.masked_fill_(t.triu(t.ones_like(attn_scores), diagonal = 1) != 0, self.IGNORE)

Things for next cycle

Like last cycle, just continuing my working on implementing the Speech-Transformer paper, and trying to practice learning generously more next cycle.

Extension

I'm back at RC for a six-week half batch, continuing my work on building automatic speech recogniton (ASR) systems from scratch for science and fun :)

During my last 12-week batch, I created Zora, an interpretable machine listening library for voice and speech. I gave a presentation on it during the last week of batch that you can check out here. I demonstrated how you can use this library to build a speech digit recognizer i.e. recognizing someone saying the digits 0-9. My goal for this half batch is to build out a full-spectrum ASR system with my library that should be able to do robust speech-to-text recognition.

Towards those ends I've planning on implementing a transformer-based ASR system based on the Speech-Transformer paper. This cycle was spent mostly getting myself set up, as well as participating in first-week-of-batch activities, meeting all the new Recursers, and catching up with familiar faces.

Day 1

I spent most of my day reading the Speech Transformer paper (a more... :cough:...accessible version of the paper can be found here).

Day 2

I started setting up my environment for development, which meant setting up some scaffolding for testing with pytest. I also learned that conda has this weird way of listing dependencies in your requirements.txt file by appending an @ symbol and a local path to that library, which breaks running pip install -r requirements.txt. The way to get around this was by using pip list --format=freeze to generate requirements in the right format i.e. pip list --format=freeze > requirements.txt

I starting writing code to implement the self-attention mechanism, and afterward I had a nice coffee chat with a Recurser before attending first-week presentations

Day 3:

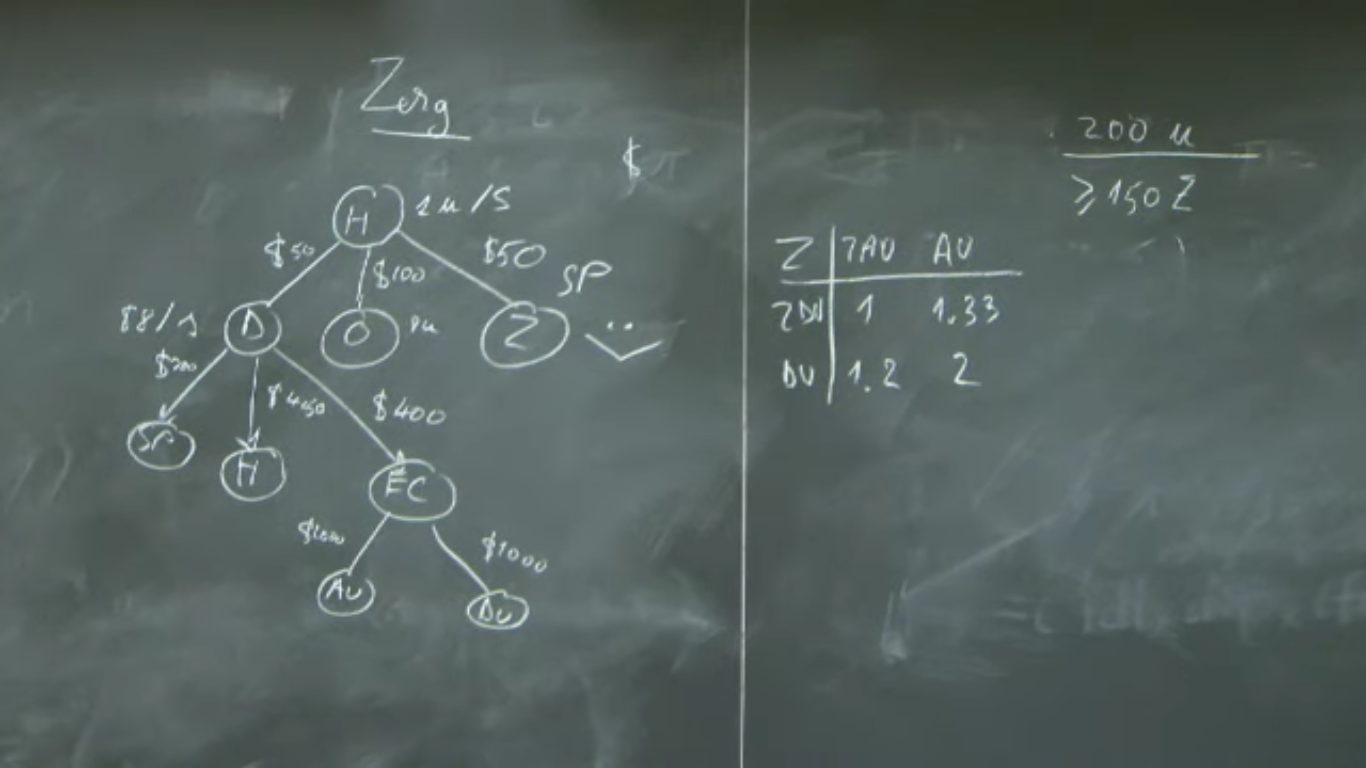

Today was spent attending the "Building Your Volitional Muscles" workshop. I actually feel really good about what I want to focus on, why I want to focus on it, and what that means for deprioritizing other things that I might be curious about doing but might not be as important to me given all the other things I want to do. I know that my deep passion lies with the triangle of audio/sound/music, software engineering, and ML/deep learning. I ended up organizing everything into these buckets:

ASR

Week 1 - Transformer training

Week 2 - Finetuning

Week 3 - Mechanistic Interpretability

Week 4 - Metrics and Evals

Week 5 - Data upload / model weights

Week 6 - Present!

Stretch goals:

Filling gaps in Software Engineering knowledge

Picking up one of these books and doing something with them (either playing on Heap and/or developing some MLOps skills):

I rounded out the day with a meeting for the Heap Computer Club, which went really well. We made a very short presentation about the cluster, how to use it, and how to get more involved, which can be found here.

Things for next cycle

Along with just continuing my working on implementing the Speech-Transformer paper, I want to see if I can slide in some of my other side quests here and there.

I also want to get better at vim, so I'm going to try to watch one video from Primagen's Vim As Your Editor series a da.

Conclusion

This is the last cycle for my current batch at Recurse! Even though I'm technically extending my batch by six weeks, I feel the significance of this 12-week batch ending.

Day 1

Today I worked on my presentation on Zora! As part of that, I ended up generating a lot of cool gifs like the one below:

Day 2

Today I presented on Zora, my interpretable machine listening library for voice and speech.

Here is a link to my presentation: Zora: An Interpretable Machine Listening Library for Voice and Speech

Day 3:

Last day of batch! I basically just worked on this log post, along with spending time with my fellow RCers before we all go off on our post-batch journeies ;_;

Things for next cycle

That's it for now. Looking forward to my six week batch extension :)

Conversation

This cycle was mostly occupied with an all-day AI Safety Workshop that I atteneded at RC.

Day 1

I attened an AI Safety Workshop at Recurse Center.

This one slide really stuck with me

Day 2

I did some non-RC things on this day.

Day 3:

I worked on my Zora presentation, for next Thursday (last cycle of this batch)

Things for next cycle

Next cycle is my last one for my second batch! ;_;

Dimension

This cycle was mainly working on Zora, finishing up some data pre-processing on Heap (as well as fixing some Ansible code!).

Day 1

I worked on a bug in the Ansible code that manages the Heap cluster, debugging a nested for loop that can be improved with some proper data structures.

I finished CommonVoice data preprocessing. The directory of wav files, produced from the mp3 files, totals 390GB :) Compare that to the zipped version of the download, which was only 84GB.

(base) jo@broome:~$ du -sh /data/jo/commonvoice/*

84G /data/jo/commonvoice/commonvoice.tar.gz

483G /data/jo/commonvoice/cv-corpus-19.0-2024-09-13

(base) jo@broome:~$ du -sh /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/*

79M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/clip_durations.tsv

92G /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/clips

390G /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/clips_wav

4.7M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/dev.tsv

92M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/invalidated.tsv

104M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/other.tsv

1.3M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/reported.tsv

4.7M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/test.tsv

352M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/train.tsv

456K /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/unvalidated_sentences.tsv

223M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/validated_sentences.tsv

555M /data/jo/commonvoice/cv-corpus-19.0-2024-09-13/en/validated.tsv

Day 2

I started porting training code to Zora library.

Day 3:

Today was more porting of training code.

Things for next cycle

I'm going to start working on my presentation for Zora, continue working on the library, maintaining Heap, and finding time for some other fun programming :)

Extension

Most of this cycle was spent with family, so I leaned into that more than anything else. I made a bit more progress on working with the Common Voice dataset (more below). More importantly, I think I decided I want to extend my batch at RC for six weeks! I'm going to check in with the RC faculty next cycle and see if that would be possible.

Day 1

I did a silly thing and screwed up my data preprocessing for the Common Voice dataset. The dataset comes as mp3s, so I tried to convert them to wavs with a new sampling rate of 16000. Unfortunately the code I wrote ended up stretching the audio out, so not only did it completely balloon the wav version of the dataset to about 2 terabytes (yikes), it also made all of the audio unusable. So, I rewrote the code, and I'm now reprocessing all of the files again. Lessons learned!

Day 2

Today was mostly spend re-processing the Common Voice data set.

Day 3:

I'm still continuing to re-process the dataset, which is just about halfway done.

It's also December 1st, which means Advent of Code is starting. I've never done it before so I dedided to try it out. My main intentions are for me to practice Python and have a little fun doing this while hopefully stretching myself a bit as a programmer through the process.

Things for next cycle

For the next few cycles that wrap up my current 12 week batch, I want to:

Transportation

This cycle I flew back home to Florida to be with my family for the holidays, so I've been spending most of my time with them. I did manage to get a few things done however, which is exciting.

Day 1

Today was mostly spent working on getting set up to download the Common Voice dataset and preparing myself to work with it. Some code around downloading, extracting, and converting the mp3 files to wav files can be found in this notebook.

Day 2

I worked more on Zora and created some fun classes and functionality, including a listener class that has two main functions, listen and interpret.

import numpy as np

import torch as t

class Listener:

def __init__(self, model_architecture, model_weights, interpreter):

self.model_architecture = model_architecture

self.model_weights = model_weights

self.interpreter = interpreter

def load(self):

# get our device

device = t.device("cuda" if t.cuda.is_available() else "cpu")

print(f"Using device: {device}")

# load the model

self.model_architecture.load_state_dict(t.load(self.model_weights, map_location=device))

# load the interpreter

self.interpreter.load(self.model_architecture)

def listen(self, spec):

# Pass spec into model

outputs = self.model_architecture(spec)

prediction = str(outputs.argmax().item())

print("Model prediction:", prediction)

def interpret(self, spec):

self.interpreter.interpret(spec)

Day 3:

Today I started working on downloading the Common Voice dataset for my transformer-based ASR model. It's going to take.... a couple of days to convert the 2459129 mp3 clips into wav files, so we are now sitting back and waiting for that to happen.

Things for next cycle

A little bit of the same for next cycle: working on Zora, working on ARENA and helping out with Heap.

Gestation

This cycle was a lot of work related to starting my library called Zora. It's an interpretable machine listening library focused on voice and speech. You can learn more about Zora, my values and intentions around the library, and ways to contribute here!

Day 1

Today was focused mostly on non-RC related activities

Day 2

Today I worked on Zora and made some nice progress. I also started on an implementation of a transformer-basesd ASR system based on the Speech-Transformer paper.

Day 3:

Today I tested some new set up on the Heap cluster. Some other Recursers set up a new 10TB HD that can be accessed from other machines, which is a huge boon for the cluster at large.

Afterward I had a nice pairing session with another Recurser around my library. I was prompted to explain how convolutions work and what were seeing in some of this feature visualization work I'm doing. It was really nice trying to explain these concepts out loud, and I arrived at some language around convolutional layers generating a "low resolution, but highly information dense" representation of an input as it passes through the network.

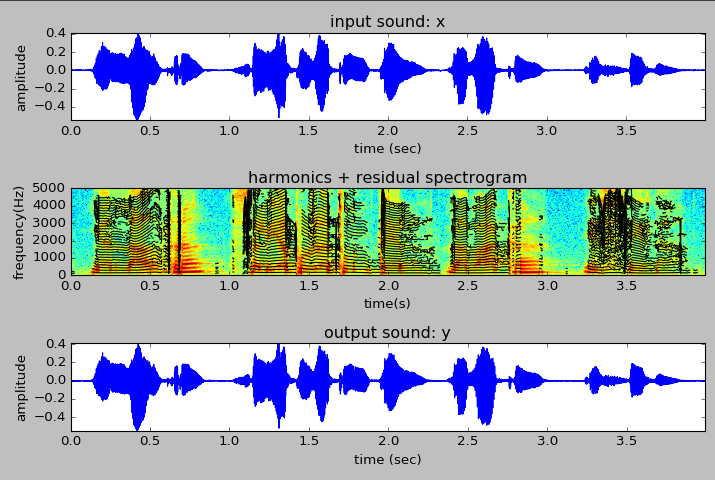

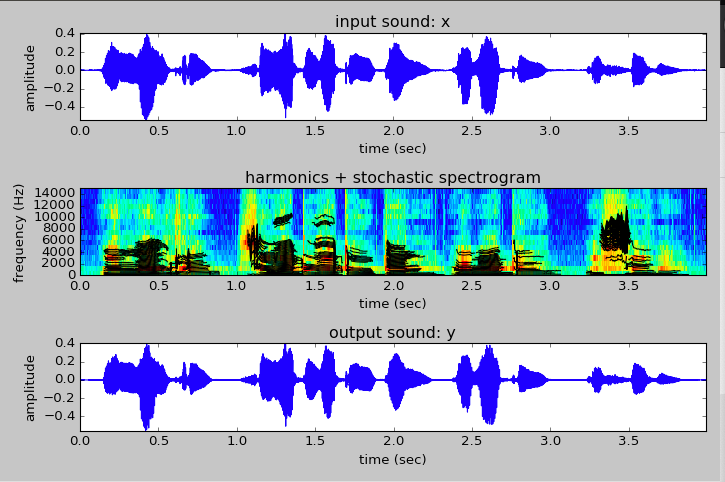

Here are some first attempts at visualizing the activations in this CNN trained on speech digit information (this is for the number 6):

They also were excited by how the library makes possible specific, low-latency, and local-first machine listening, which was always a desire! Not having to be connected to the internet could make the library appealing in settings where one doesn't have reliable access to the internet.

Finally we chatted about how interpretability functionality allows the user of the library to get more direct interpretation of what is going on in the model, especially around more "non-visual" data like sound, verses having to just guess based on what you hear (which could be more unreliable).

Things for next cycle

For next cycle, it will be much more of the same: working on this library, working on ARENA and helping out with Heap.

Continuation

I'm back from my retreat in Puerto Rico, and started to get back into Recurse activities at the end of this cycle.

Day 1

JT retreat

Day 2

JT retreat

Day 3:

Today I worked on ARENA, Heap, and wrote my first Python library with a fellow Recurser.

Things for next cycle

For next cycle I'll be focusing back on my ASR library, getting back into ARENA, and doing work on Heap.

Vacation!

I'm at a retreat for my fellowshp in Puerto Rico! My favorite conversation with a co-fellow was about the future of space engineering.

Day 1

Today I read the Speech-Transformer paper and started a repo for Zora.

Day 2

Today I panted my studio.

Day 3:

Today I checked in about installing a new harddrive on Heap.

Things for next cycle

I'm going to start working on my ASR library Zora next cycle.

Reflection

This was another contemplative, introspective cycle. I think I needed a little distance from ARENA and some time to work leisurely on Audrey, while thinking towards my larger goals for my time at RC, with half of batch to go.

I decided to work on a library called Zora, which will be an automatic speech recognition (ASR) library and platform focused on interpretability, openness, and personalization.

I also had some time to think about my relationship to RC's self directives. In short, I've been feeling the tension between working at the edge of your abilities and building your volitional muscles. I think that sometimes at certain moments, or over long, sustained periods of time, working at the edge of your abilities may not be the thing you necessary want to do, in service of staying at that edge. It often may no longer be grounded in curiosity nor joy. I think I've been motivated mostly through curiosity, but at times not felt joy as much as I would like, especially when working on really hard things. I'm working on re-finding that balance between the two... I think spending some time this cycle on reminding myself of the why is helping re-energize and re-motivate me in the what* that I'm doing.

Day 1

Day 2

Day 3:

Things for next cycle

I'm going to spend some time working while preparing for my upcoming Just Tech retreat to Puerto Rico!

Direction

This cycle was a little direction-less. I'm feeling the slog of ARENA kick in, and I'm trying to think about what I want to do next for my Audrey project / ASR self-study. A bit of a light cycle on work, so not too many updates.

Day 1

I worked on Heap with some Recursers and was able to improve the system with respect to updating ssh keys for users.

Day 2

Today I chipped away more on mechanistic interpretability, with respect to identifying different kind of induction heads and circuits.

Day 3:

Not much was done on this day.

Things for next cycle

For the most part, I just want to continue on with ARENA, ASR self study, and Heap. Also, we'll be welcoming in a new batch!

Transition

This cycle was travel-impacted, as I went to Indiana University to give an artist talk and take part in a roundtable discussion with artists and scholars in support of Blurring the Lines: Art at the Intersection of Human and Artificial Creativity, a group exibition I'm showing work in at IU's Grunwald Gallery.

It was a great experience and I'm really glad I went. Therefore, not much RC work was attended to this cycle. I'm am happy for some of the mental space it did give me, and I'm feeling even more inspired and motivated to continue the work I'm doing :)

Day 1

On Induction Heads

I spent most of the morning learning about Induction Heads and Circuits from these two resources:

Day 2

Today was a travel day for me to Bloomington, Indiana.

Day 3:

I gave a talk and joined a roundtable for the exhibition, and just got home not too long ago.

Things for next cycle

I'll be continuing on with ARENA, and I have some ideas around building an audio ML library, with Audrey being one of the examples :)

Interpretation

This cycle was spent starting mechanistic interpretability, training my automatic speech digit recognizer based on Audrey, and contemplating how to best work on and maintain the Heap machines.

Day 1

On Mechanistic Interpretability

I worked on using TransformerLens to look inside of a pretrained GPT-2 model and begin the practice of mechanistic interpretability!

Day 2

Today I worked a bit more on mechanistic interpretability, but quickly realised that there was a lot more required reading I needed to do in order to get through the next section, so I instead decided to procrastinate reaeding at RC to work on Audrey a bit more. I'm delighted to say I got it training and working! It doesn't generalize well to my voice, so I'm going to go back and make a dataset derived just from my voice, so that my trained model works uniquely for me. I'm realizing that it might be very easy to imagine a world where everyone just has their own personal weights for models, and you could just pipe that into the model, given its archiecture, and have it would exceptionally well for you. I'm going to explore this idea more during the rest of my batch...

Day 3:

Today I took some time to reflect and sift through the large amount of Neel Nanda content out there. As I'm working through this material on mechanistic interpretability and reverse-engineering transformers, I'm trying to organize a sequence of things to read in order to keep up.

These two feel like the first places to start / read:

These are some videos that I think would be next in line to watch:

A Walkthrough of A Mathematical Framework for Transformer Circuits video

A Mathematical Framework for Transformer Circuits paper

A Walkthrough of In-Context Learning and Induction Heads video

In-context Learning and Induction Heads paper

And then these are more around context and open questions in the field - more optional but really help to set the stage and articulate the stakes of working on this problem:

Things for next cycle

For the reset of Chapter 1 in ARENA, we get to choose what to do next, given a set of exercises. I'm interested in superposiiton, so I'm excited to check out resources like this one on Toy Models of Superposition.

I also want to go back and think about transformers a bit more deeply.

I had a really great conversation with two Recursers about audio classification, neural network architectures, and other things. As I'm finishing up this first pass on a simple ASR system, we were thinking about interesting challenges we could work on. One could be solving audido CAPTCHA challenges. As someone who has been on the internet for a very long time, I couldn't believe I had never encountered the audio version of CAPTCHAs before!

I'm wondering if it might be a fun challenge to build a Reinforcement Learning (RL) project that learns to solve these audio CAPTCHA challenges....something to think about for the second half of my batch when we get to RL with ARENA.

Some thing I want to do for Audrey include:

Along with using my own voice, I'd like to spend some time looking at this Audio MNIST dataset.

I'm also thinking about the architecture I should choose in building my transformer-based ASR system. It seems like the Conformer is what I'm looking for. Some resources include:

There are other, non-CNN architechtures as well:

Transformation

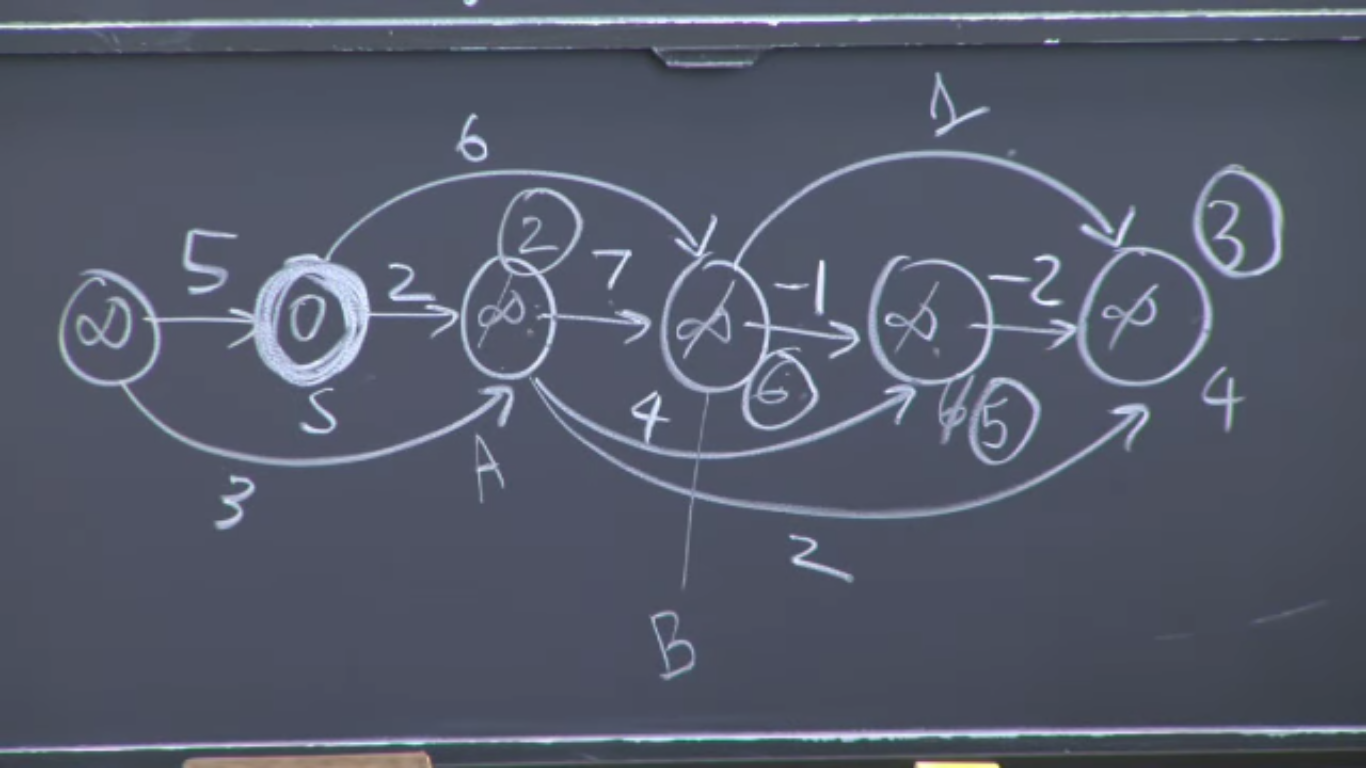

This cycle was a lot of pairing on transformers. For example we implemented a Transformer Block like the one diagramed above.

Day 1

I was offline doing non-RC things :)

Day 2

Building and Training Transformers

I paired today on building an implementation of GPT-2 from scratch!

Stanford CS25: V2 I Introduction to Transformers w/ Andrej Karpathy

Day 3:

Following up from yesterday, I did more pairing on transformers, this time learning different methods for sampling from a pretrained GPT-2.

How to generate text: using different decoding methods for language generation with Transformers

I also worked more on Audrey! I'm hoping to present something around Week 7.

Data Augmentation Techniques for Audio Data in Python

Things for next cycle

I want to focus on ARENA, Audrey, and helping support Heap!

Automatic Speech Recognition with Transformer

Things to research

Optimization, Propagation, Variation, Generation, Discrimination

Day 1

I'm still super happy about what I learned about CNNs and ResNets last week!

Here's another short explainer on how ResNets work.

I finished the optimization section in ARENA and started moving into backpropogation.

I learned a lot of new things, including:

On Optimization

I also spent a bit more time workin on Heap, and realize that I need to learn more about Ansible and how it works in order to be better and making improvements/updates to Heap in the future.

On Backpropagation

After finishing the backpropagation section in ARENA, I started pairing with a fellow Recurser on generating a synthetic speech dataset that contains speakers saying the numbers 0-9, as a way to start building a simple audio speech classifier from scratch.

Some resources:

Day 2

On VAEs and GANs

Today we started learning to build and train Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs)

I started learning about building and training GANs today, and will have to finish that up on the first day of my next cycle.

Day 3:

Things for next cycle

I have a small bit of work on GANs to finish at the top of the week, which I'm looking forward to getting past, because...

Next we we will learn to build Transformers and start getting into mechanistic interpretability! I've been preloading my brain with a lot of resources on the topic, linked below. Really looking forward to getting my mind blown next week :)

On AI Safety

On Mechanistic Interpretability

Normalization

This cycle was mostly spent with ARENA and NNFS. I've been spending a lot of time thinking about what to do post-ARENA, which lead me to think a lot about why I'm even doing ARENA in the first place. I dug around a lot more into AI safety, and I think I'm motivated by interpretability and observability of models. I'm interested how some of this work will translate to voice based models and agents, as that really feels like an under-explored area that I would have a lot of fun figuring out with opportunities to make novel contributions.

Day 1

I built an implementation of ResNet34 from scratch, and it was super exciting to have it all come together.

I also just had tons of fun debugging the network, seeing how many parameters it contained, and delightfully moving through the code in order to get it to work correctly. I found a lot of joy in that kind of low-level ML engineering, and could see myself having lots of fun doing that kind of work professionally.

Here is what my ResNet looked like with some layers missing

I had a really subtle bug where I was returning a list to my BlockGroup class that didn't match the size of other params that I was zipping up in order to determine the number of layers in my BlockGroup.

After a bit of debugging I realized that my in_featers_per_group was missing two entries. It turns out that I was returning just the first two elements of the list by saying self.out features per group[:1], but I just wanted to get a list without the last element, in which case I meant to write [:-1] instead of [:1]. So subtle yet so crucial!

I made the fix and got the right size so that my zip function would create four BlockGroup objects instead of just two.

And here's what it looked like once I properly got those layers networked together.

After finishing this, I started to realize that I now have enough skills to start building my own speech classifier, and that would be a really fun and exciting thing to work on as we finish up all of these pre-requisite, foundational excercises for AREA.

Day 2

Today I started working on the Optimizers chapter in AREA, and also started watching videos related to AI safety, just to get a better sense of the field.

I also made some much-needed improvements to Macaw, including adding paper authors to the show description.

Day 3:

Today I worked more on Optimizers and paired with a Recurser on Ansible setup on Heap :)

Things for next cycle

Convolution

Another cycle that was motly focused on ARENA plus working through the Neural Networks from Scratch book.

Day 1

Today was Impossible Day at RC, so I started by finishing Chapter 5 in NNFS and then worked through translating this tutorial on building an ASR system with a Transformer model from Keras to PyTorch. I got it mostly done, but wasn't able to get it running on Heap due to some issues with Heap's Python environment. Something to work through on another day :)

A fellow Recurser also presented on FFTs and showed off their guitar tuner written in C!

Day 2

Today was the start of working on Convolutional Neural Networks

And then I wrapped up finishing Chapter 6 in NNFS

Day 3:

This morning a fellow Recurser introduced me to Piper, a text-to-speech program that can run on a Raspberry Pi. Something to look into and play with!

In our ARENA check-in call, we talked about the following projects:

Today I continued working on CNNs and encounted the followint topics:

Things for next cycle

Transposition

This cycle was consumed by ARENA, plus work from the Neural Networks from Scratch book/videos. I did a lot of work with tensors, einops, linear algebra, and manipulating matrices. I'm still trying to build up an intuitive feeling for thinking and programming in this way, so I'm taking every mistep as a sign that I'm really working at the edge of my abilities - whenever I have to learn something new, I take it as evidence of that fact. And I learned a lot of new things.

Day 1

Today I started the exercises for ray tracing

I was also able to pair with another Recurse and get onto the GPU machines on Heap :)

Day 2

I worked through Neural Networks from Scratch (NNFS) Chapters 1 and 2

I also learned more about PyTorch for manipulating tensors, matricies, vectors, arrays...

vs.

Day 3:

Today I finished the ray tracing exercises, with some help from an AI assistant (I use Claude Sonnet 3.5). The exercises actually recommended we do that, and it was nice to work with something (I almost wrote someone) that I can share ideas and approaches with, confirm my intuition, and help lead me to the right implementation. This was super helpful, because these last exercises were pretty chanllenging for me. We dealt with 2D rays and seeing if they interset with triangles. All of this was meant to help build up to a function that rendered a mesh of Pikachu.

Some more PyTorch functions that I came across:

I also came up against broadcasting, which I need to spend more time internalizing.

Lastly I finished NNFS Ch 3

Things for next cycle

Submersion

This cycle was a bit intense, and I feel less like I'm immersing myself in ML/AI studies and instead I'm fully submerging myself into it, like diving into a deep, wide ocean with no intention of swimming back up to the surface. A lot happened this cycle, but strangely I don't have too much to write about it for the time being. I spent the first two days of this cycle moving through online videos on linear algebra, and all of my notes are in my notebook for now. Which meant I did very little coding. But on the last day of this cycle, I did get around to some coding, through the lens of learning about einops. More details below...

Day 1

I watched 3Brown1Blue's Essence of Linear Algebra videos and took copious notes.

Day 2

At the Audio HangShared my Speech Emotion Recognition notebook in the Audio Hang group, and got into a conversation about audio signal feature extraction and clustering, especially around mel-frequency cepstral coefficients. We also talked a lot concatenative synthesis and granular synthesis, and another Recurse showed off one that they built from scratch in Rust and JS :)

Later on in the day I finished all of the Essense of Linear Algebra videos :) As I get deeper into Linear Algebra, I'd love to check out this book: Linear Algebra Done Right.

Day 3:

For the AI ML Paper Cuts study group, we read the now-classic Attention is All You Need which popularized both self-attention mechanisms and transformer models. I watched a couple of videos to help complement the paper, including Illustrated Guide to Transformers Neural Network: A step by step explanation and Attention Is All You Need over by Yannic Kilcher.A lot of it was over my head, but I was happy to expose myself to it this early, and at the very least I could follow and understand the implications of the paper. I'll be excited to revisit this paper once I have a firmer understadning of the fundamentals that lead up to it. In particular, there was some discussion on understanding some of the transformer's sublayers a bit more in depth.

It was mentioned in the call to check out 3Blue1Brown's videos on Neural Networks to get some basics on neural networks in an intuitive, visual way. This annotated version of the Transformer paper also seems like a great resource, especially for its visualizations of positional encodings.

Next week we are scheduled to read Learning Transferable Visual Models From Natural Language Supervision, which introduces Contrastive Language-Image Pre-training. I'm super interested in learning about this idea because it lead to Contrastive Language-Audio Pre-training, which makes possible text-to-audio systems introduced in the analogous paper CLAP: Learning Audio Concepts From Natural Language Supervision. So while I have a lot of things going on already, it would be nice to try to keep up with that paper because of its implications to other areas that I'm currently interested in with respect to audio machine learning.

In the afternoon I worked through an ARENA pre-requisite on einops, a Python library for tensor manipulaton that prioritizes readability and explicitness. It is based on Einstein notation I worked through the einops basics and started to develop an intuitive feel for how the library works, compared to NumPy or PyTorch. This intro video to einops was also very helpful for getting a fuller sense of its usefulness. Some other resources I came across include this article on einops in 30 seconds (早い!), this Reddit post on how to read and understand einops expressions, and two resources sharing a similar, catchy title: Einsum is All you Need - Einstein Summation in Deep Learning and Einsum Is All You Need: NumPy, PyTorch and TensorFlow.

As a way to encapsulate all of the ML/AI self-study I'll be doing (and have been doing), I created a monorepo to contain all of the topics that I'll be learning as a one-stop-shop for all things related to ML/AI.

Things for next cycle

Matrices

class Solution(object):

def setZeroes(self, matrix):

"""

:type matrix: List[List[int]]

:rtype: None Do not return anything, modify matrix in-place instead.

"""

list_of_zeros = []

width = len(matrix)

height = len(matrix[0])

# find the 0s in the matrix

for x in range(0, width):

for y in range(0, height):

if matrix[x][y] == 0:

# what should we do when we find a 0?

# naive approach, store loc in a list, and then once we've scanned the matrix, go back and set the rows/cols for that location to 0

list_of_zeros.append([x, y])

for loc_of_zero in list_of_zeros:

def set_row_col_zero(loc):

x = loc[0]

y = loc[1]

# set row to zero

for r in range(0, width):

matrix[r][y] = 0

# set col to zero

for c in range(0, height):

matrix[x][c] = 0

set_row_col_zero(loc_of_zero)

Attenuation

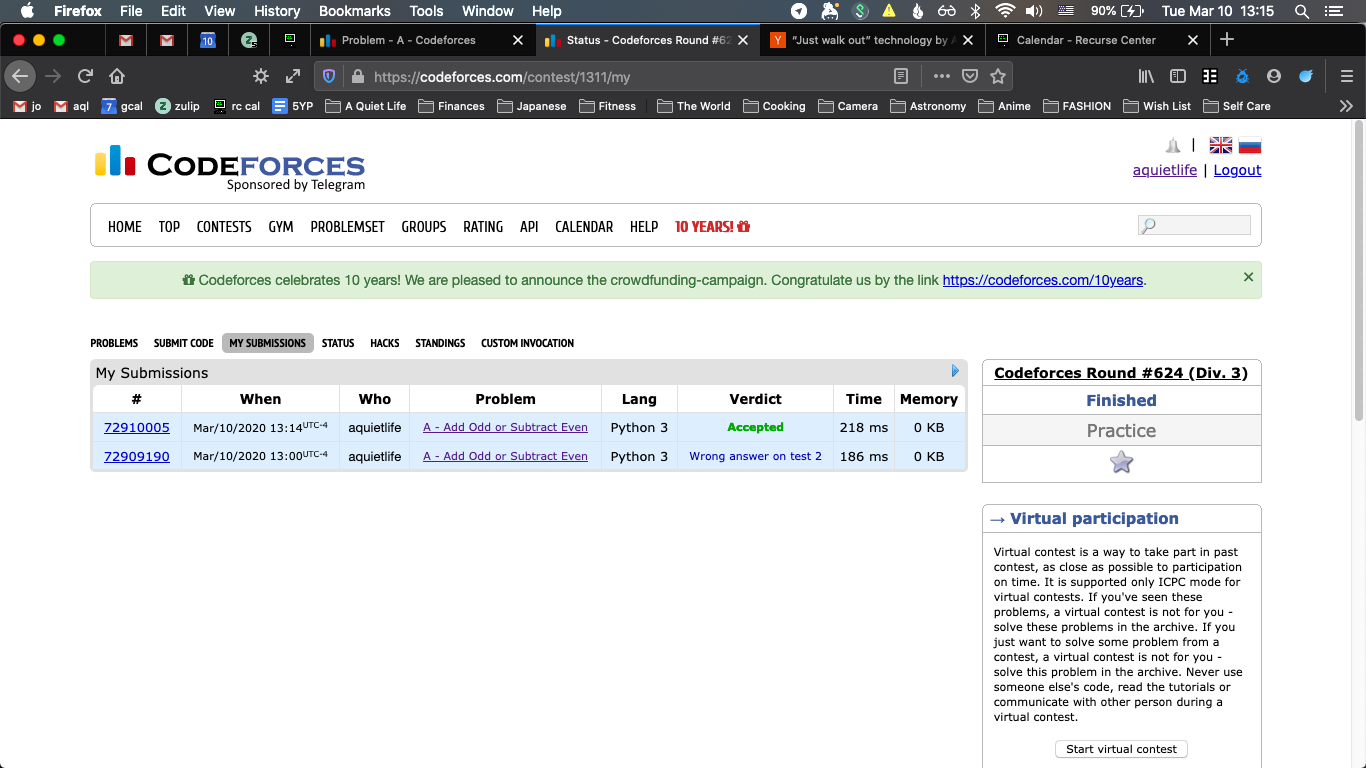

Day 1